24 Cores and I Can't Play Games at a Stable 60fps

Social Justice is using a $15K worthy computer but has worse gaming performance than a cheap BestBuy prebuilt. - Anonymous friend in a chat group

It was another terrible night, and I decided to play some games to relax after finishing some intense work. For a while, I’ve been playing Descenders on my game console, but this time I was playing it on PC. I immediately noticed significant stutter at a fixed interval (every 10 or 15 seconds).

When a game lags, most people first suspect the graphics settings, and my first reaction was to turn them down even though my card is pretty decent (NVIDIA RTX A5000). However, the stutter remained. The symptom suggested something more complicated. Enter the performance profiler.

Windows Performance Analyzer

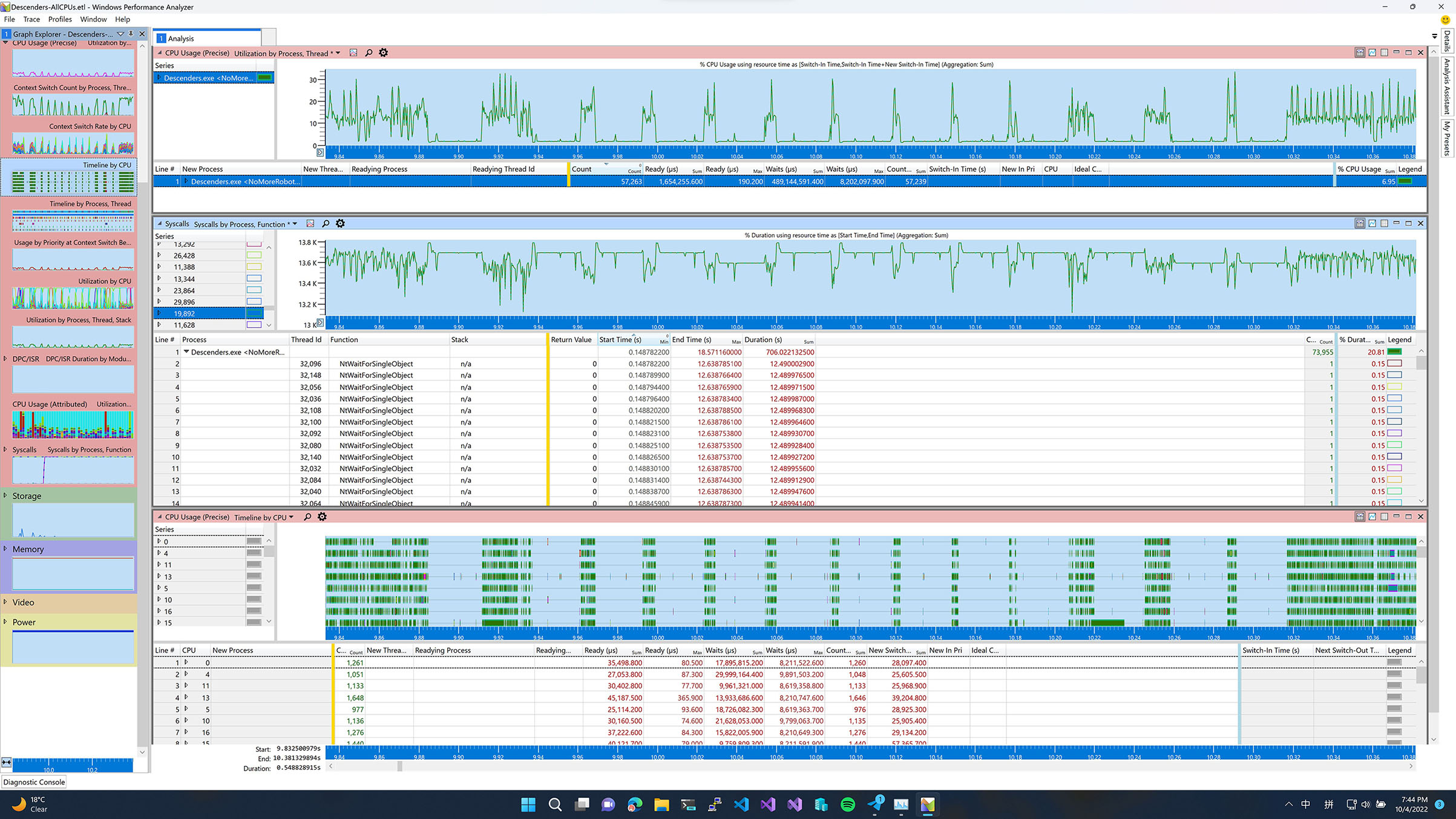

A good thing about indie games is that they seldom come with DRM, so it’s a terrific opportunity to attach a profiler and figure out what is happening. After recording a performance trace, one thing immediately stood out: the CPU usage graph matched what I observed in the game.

I selected a section of the graph and added a few other views that could help me investigate the issue. It got more interesting: I saw many NtWaitForSingleObject entries at each occurrence of the lag, and the CPU usage timeline showed that nothing intensive was scheduled during those periods. The threads were essentially idle.

This led to a hypothesis: one thread regularly took an exclusive lock, and the other threads stopped working and waited for it.

Unity’s GC Implementation

Since Descenders is a game powered by Unity, I shared my profiler data and initial findings with friends who have Unity expertise in the gaming industry. One of them mentioned that Unity’s garbage collector is vanilla Boehm, which requires a global lock that stalls all threads during garbage collection. The Boehm GC scalability notes describe two effects of its thread support:

- It causes the garbage collector to stop all other threads when it needs to see a consistent memory state.

- It causes the collector to acquire a lock around essentially all allocation and garbage collection activity.

Unity uses the standard Boehm GC algorithm regardless of the runtime choice.
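To make the two effects listed above concrete, here is a minimal toy sketch in Python. It is not Boehm's or Unity's actual code: `ToyHeap`, `allocate`, `collect`, and `demo` are hypothetical names invented for this illustration. The only point is that when allocation and collection share one lock, a thread that tries to allocate during a collection simply waits.

```python
import threading


class ToyHeap:
    """Toy model of a heap guarded by one global lock (not Boehm's real code)."""

    def __init__(self):
        self._lock = threading.Lock()
        self.allocations = 0

    def allocate(self):
        # Every allocation takes the global lock.
        with self._lock:
            self.allocations += 1

    def collect(self, while_paused=None):
        # The collector holds the same lock for the whole collection.
        with self._lock:
            if while_paused is not None:
                while_paused()


def demo():
    heap = ToyHeap()
    observed = {}

    def probe():
        # Runs while the "collector" holds the lock: start a worker that
        # tries to allocate and give it a moment to make progress.
        worker = threading.Thread(target=heap.allocate)
        worker.start()
        worker.join(timeout=0.2)
        observed["blocked"] = worker.is_alive()
        observed["allocations_during_gc"] = heap.allocations
        observed["worker"] = worker

    heap.collect(while_paused=probe)
    observed["worker"].join()  # the lock is free now, so the worker finishes
    observed["allocations_after_gc"] = heap.allocations
    return observed
```

In this model the worker makes no progress until `collect` returns, which is the same shape as the idle threads stuck in NtWaitForSingleObject in the trace.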

AMD CPU Design at Scale

AMD has been known for its modular, scalable chiplet design since the launch of Zen, and of course, Threadripper Pro is no exception (it’s essentially a binned Epyc). My machine uses an AMD Threadripper Pro 5965WX CPU. On the 5965WX, there are 4 CCDs (each with 6 cores enabled). One downside of this design is higher latency for any inter-core communication that crosses a CCD boundary. An imprecise core-to-core latency test reports 120ns inside a CCD (with some light load in the background) and 450ns+ across CCD boundaries.

Apparently, when the Boehm GC was designed, no such CPU design existed, and this kind of lock usage can hurt performance significantly on these split-brain designs. Recently, I talked to a colleague about this, and he mentioned that some of our production code showed similar performance characteristics during our AMD migration test.
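As a rough back-of-the-envelope illustration of those two latency figures, the sketch below computes the cross-CCD penalty and how many strictly serialized core-to-core handoffs would fit in one 60fps frame. The function names are hypothetical, and the model is an assumption: real lock traffic is not a chain of back-to-back handoffs, so treat the counts as an upper bound, not a prediction.

```python
INTRA_CCD_NS = 120.0  # measured inside one CCD (figure quoted above)
CROSS_CCD_NS = 450.0  # lower bound measured across CCD boundaries


def cross_ccd_penalty():
    """Ratio of cross-CCD to intra-CCD core-to-core latency."""
    return CROSS_CCD_NS / INTRA_CCD_NS


def handoffs_per_frame(latency_ns, fps=60):
    """How many strictly serialized handoffs fit in one frame budget."""
    frame_budget_ns = 1e9 / fps
    return int(frame_budget_ns // latency_ns)


if __name__ == "__main__":
    print(cross_ccd_penalty())
    print(handoffs_per_frame(INTRA_CCD_NS), handoffs_per_frame(CROSS_CCD_NS))
```

With these numbers, every contended handoff that crosses a CCD boundary costs at least 3.75 times as much as one that stays inside a CCD.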

Interim Mitigation and Future Work

For now, I decided to pin the game to a single CCD, and the stutter improved dramatically. I also reported the issue on the official Discord server (apparently the volunteer on the server thinks the problem is related to something else), and I hope it can be addressed soon.

start /affinity 0xFF Descenders.exe
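The affinity mask in the command above is a bitmask with one bit per logical processor. The sketch below shows how such a mask could be derived for a whole CCD. It assumes logical processors are numbered contiguously, CCD by CCD, with 6 cores per CCD and SMT enabled (2 threads per core). That numbering is an assumption worth verifying on the actual machine, and the helper names are hypothetical.

```python
def ccd_affinity_mask(ccd_index, cores_per_ccd=6, threads_per_core=2):
    """Return a bitmask selecting every logical processor of one CCD.

    Assumes logical processors are numbered contiguously, CCD by CCD.
    """
    if ccd_index < 0:
        raise ValueError("ccd_index must be non-negative")
    logical_per_ccd = cores_per_ccd * threads_per_core
    ccd_mask = (1 << logical_per_ccd) - 1
    return ccd_mask << (ccd_index * logical_per_ccd)


def stays_inside_ccd(mask, ccd_index, **layout):
    """True if every bit set in mask belongs to the given CCD."""
    return mask != 0 and mask & ~ccd_affinity_mask(ccd_index, **layout) == 0


if __name__ == "__main__":
    print(hex(ccd_affinity_mask(0)))  # full first CCD under the assumed layout
    print(stays_inside_ccd(0xFF, 0))  # the mask used in the command above
```

Under that assumed layout, 0xFF selects the first 8 logical processors, all of which sit in the first CCD, while the full first CCD would be 0xFFF.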

There might be a few more things to try out (oh my TODO list):

- Write a mod to disable Garbage Collection globally.

- Advise the developers to use the incremental GC mode. I haven’t inspected the code to check which configuration they use, but it’s unlikely to be the incremental one.

- Wait for Unity to migrate to NativeAOT (which doesn’t use Stop-The-World GC) in a few years :(

Acknowledgements

- I just realized someone complained about the same issue on Hacker News a few years ago. Apparently there are some mitigation approaches now, but they are likely not fully adopted in the game.

- Dark Kowalski, Delton Ding and James Swineson for the performance discussion.